Managing files in MonkeysLegion with monkeyslegion-files

Posted by admin – July 27, 2025

Uploads, storage drivers (local/S3/GCS), handy helper functions, and a small controller example you can copy-paste.

What you get

- UploadManager – validates and stores multipart/form-data uploads.

- Storage drivers – local built-in; optional S3 / Google Cloud Storage.

- Deterministic paths – date-based folders plus SHA-256 slug.

- Helpers – simple globals for quick writes/reads/signing URLs.

- .mlc config – one place to switch disks and limits.

Repo: monkeyscloud/monkeyslegion-files.

1) Install

composer require monkeyscloud/monkeyslegion-files

# optional drivers

composer require aws/aws-sdk-php # for S3

composer require google/cloud-storage # for GCS

The package autoloads its helpers through Composer and ships a service provider, which you register in step 3.

2) Add config/files.mlc

Create config/files.mlc (note: MLC uses curly braces, not TOML sections):

files {

default_disk = "local"

max_bytes = 20971520

mime_allow = ["image/jpeg","image/png","image/webp","application/pdf"]

disks {

local {

root = "storage/app"

public_base_url = "files"

}

# s3 {

# bucket = "my-s3-bucket"

# region = "us-east-1"

# prefix = "uploads/"

# public_base_url = "https://my-cloudfront/"

# }

# gcs {

# project_id = "my-gcp-project"

# key_file_path = "/path/to/key.json"

# bucket = "my-gcs-bucket"

# prefix = "uploads/"

# public_base_url = "https://cdn.example.com"

# }

}

}

What these mean

default_disk – which driver to bind: local, s3, or gcs.

max_bytes – per-file cap; uploads larger than this are rejected.

mime_allow – allowed MIME types (UploadManager validates).

root – local filesystem root. The provider will mkdir -p this if missing.

public_base_url

For local, set a path fragment like "files" (or "/files"). The provider will create public/files so you can serve it directly.

For CDN/full URLs, set e.g. "https://cdn.example.com". The driver will return absolute URLs.
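To make these settings concrete, here is a minimal sketch of how a size cap, a MIME allow-list, and a public_base_url could be applied. The function names checkUpload and publicUrl are hypothetical and written only for illustration; they are not the package's API, and the real UploadManager and drivers may behave differently.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical check mirroring max_bytes and mime_allow.
 * Not the package's code; UploadManager does its own validation.
 */
function checkUpload(int $sizeBytes, string $mime, int $maxBytes, array $mimeAllow): void
{
    if ($sizeBytes > $maxBytes) {
        throw new InvalidArgumentException("File exceeds {$maxBytes} bytes");
    }
    if (!in_array($mime, $mimeAllow, true)) {
        throw new InvalidArgumentException("MIME type {$mime} is not allowed");
    }
}

/**
 * Hypothetical URL builder: a full base URL yields an absolute URL,
 * a path fragment such as "files" or "/files" yields a site-relative one.
 */
function publicUrl(string $baseUrl, string $path): string
{
    $path = ltrim($path, '/');
    if (preg_match('#^https?://#i', $baseUrl) === 1) {
        return rtrim($baseUrl, '/') . '/' . $path;
    }
    return '/' . trim($baseUrl, '/') . '/' . $path;
}

// Passes silently: 1 KiB PNG against a 20 MiB cap and a two-entry allow-list.
checkUpload(1024, 'image/png', 20971520, ['image/jpeg', 'image/png']);

echo publicUrl('files', '2025/07/26/abcd1234ef567890.jpg'), PHP_EOL;
echo publicUrl('https://cdn.example.com', '2025/07/26/abcd1234ef567890.jpg'), PHP_EOL;
```

The sketch normalizes the local fragment to a leading slash. The sample response later in this post shows the URL without one, so check what your driver actually returns before relying on either form.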

3) Ensure the provider is registered

monkeyslegion-files ships a service provider. It must be invoked on the ContainerBuilder (not the built container). Add one line in your bootstrap:

// MonkeysLegion\Framework\HttpBootstrap::buildContainer()

use MonkeysLegion\Files\Support\ServiceProvider as FilesServiceProvider;

$b = new ContainerBuilder();

$b->addDefinitions((new AppConfig())());

if (is_file($root.'/config/app.php')) {

$b->addDefinitions(require $root.'/config/app.php');

}

/* Register the files provider on the BUILDER */

(new FilesServiceProvider())->register($b);

$container = $b->build();

The provider binds:

MonkeysLegion\Files\Contracts\FileStorage → Local/S3/GCS driver

MonkeysLegion\Files\Upload\UploadManager

MonkeysLegion\Files\Contracts\FileNamer → HashPathNamer

It also creates storage/app and public/{public_base_url} when needed.
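The deterministic-path idea (date-based folders plus a SHA-256 slug) can be illustrated in a few lines. This is only a sketch: the function hashPath, the Y/m/d layout, and the 16-character slug length are assumptions inferred from the sample URL in this post, not the actual HashPathNamer implementation.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical namer: <year>/<month>/<day>/<sha256 prefix>.<ext>
 * The same bytes stored on the same day always map to the same path.
 */
function hashPath(string $contents, string $originalName, DateTimeInterface $when): string
{
    $slug = substr(hash('sha256', $contents), 0, 16); // assumed slug length
    $ext  = strtolower(pathinfo($originalName, PATHINFO_EXTENSION));

    return $when->format('Y/m/d') . '/' . $slug . ($ext !== '' ? '.' . $ext : '');
}

$when = new DateTimeImmutable('2025-07-26');
echo hashPath('hello', 'Photo.JPG', $when), PHP_EOL;
```

Hashing the contents rather than the original filename means two users uploading the same file under different names end up at one path, which is one plausible reason to prefer this scheme over random names.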

4) Create an entity (optional)

Example Media entity:

#[Entity]

class Media

{

#[Field(type: 'INT', autoIncrement: true, primaryKey: true)]

public int $id;

#[Field(type: 'string')]

public string $url;

#[Field(type: 'string', nullable: true)]

public ?string $type = null;

// getters / setters …

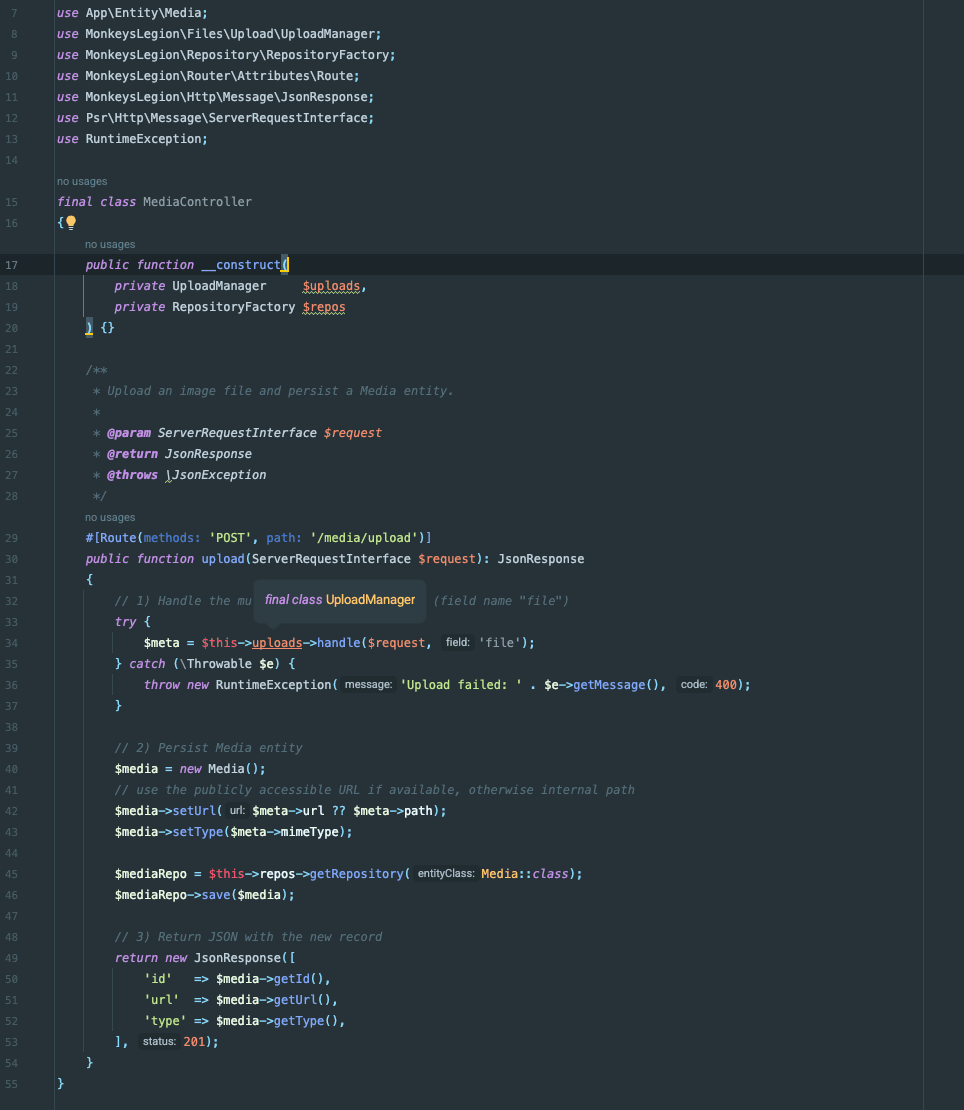

}

5) Controller: handle uploads

<?php

declare(strict_types=1);

namespace App\Controller;

use App\Entity\Media;

use MonkeysLegion\Files\Upload\UploadManager;

use MonkeysLegion\Repository\RepositoryFactory;

use MonkeysLegion\Router\Attributes\Route;

use MonkeysLegion\Http\Message\JsonResponse;

use Psr\Http\Message\ServerRequestInterface;

use RuntimeException;

final class MediaController

{

public function __construct(

private UploadManager $uploads,

private RepositoryFactory $repos

) {}

#[Route(methods: 'POST', path: '/media/upload')]

public function upload(ServerRequestInterface $request): JsonResponse

{

try {

// expects field name "file"

$meta = $this->uploads->handle($request, 'file');

} catch (\Throwable $e) {

// chain the original exception so the real cause stays in the stack trace
throw new RuntimeException('Upload failed: '.$e->getMessage(), 400, $e);

}

$media = new Media();

$media->setUrl($meta->url ?? $meta->path); // prefer public URL, fallback to path

$media->setType($meta->mimeType);

$repo = $this->repos->getRepository(Media::class);

$repo->save($media);

return new JsonResponse([

'id' => $media->getId(),

'url' => $media->getUrl(),

'type' => $media->getType(),

], 201);

}

}

Test with curl

curl -i -X POST http://127.0.0.1:8000/media/upload \

-H "Accept: application/json" \

-F "file=@/absolute/path/to/image.jpg;type=image/jpeg"

On success:

HTTP/1.1 201 Created

{

"id": 1,

"url": "files/2025/07/26/abcd1234ef567890.jpg",

"type": "image/jpeg"

}

If url is null, your disk is private; use ml_files_url() or a signed URL (below), or switch to a driver/CDN that returns public URLs.
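For readers unfamiliar with signed URLs, the general HMAC SHA-256 technique looks roughly like the sketch below. The functions signUrl and verifyUrl, the expires and signature query parameter names, and the secret are all hypothetical; the package's own ml_files_sign_url() and ml_files_verify_signature() helpers may use a different format. The sketch also assumes the path carries no query string of its own.

```php
<?php

declare(strict_types=1);

/** Hypothetical signer: appends an expiry and an HMAC SHA-256 signature. */
function signUrl(string $path, int $ttlSeconds, string $secret, ?int $now = null): string
{
    $expires = ($now ?? time()) + $ttlSeconds;
    $payload = $path . '?expires=' . $expires;
    $sig     = hash_hmac('sha256', $payload, $secret);

    return $payload . '&signature=' . $sig;
}

/** Hypothetical verifier: recomputes the HMAC, then checks the expiry. */
function verifyUrl(string $url, string $secret, ?int $now = null): bool
{
    $pos = strrpos($url, '&signature=');
    if ($pos === false) {
        return false;
    }
    $payload = substr($url, 0, $pos);
    $given   = substr($url, $pos + strlen('&signature='));

    // hash_equals compares in constant time to avoid timing leaks
    if (!hash_equals(hash_hmac('sha256', $payload, $secret), $given)) {
        return false; // tampered, or signed with another secret
    }
    if (preg_match('/[?&]expires=(\d+)$/', $payload, $m) !== 1) {
        return false;
    }
    return ($now ?? time()) <= (int) $m[1];
}

$secret = 'change-me'; // placeholder secret for the demo
$signed = signUrl('/files/2025/07/26/abcd1234ef567890.jpg', 600, $secret);
var_dump(verifyUrl($signed, $secret));
```

Because the expiry is part of the signed payload, a client cannot extend a link's lifetime by editing the expires value; any change invalidates the signature.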

6) Helper functions you can use anywhere

The package autoloads src/helpers.php:

// Store a PSR-7 stream and get rich metadata

$meta = ml_files_put($psr7Stream, 'photo.jpg', 'image/jpeg');

// Store a raw string quickly; returns the storage path

$path = ml_files_put_string('hello', 'text/plain', 'hello.txt');

// Store a local file; returns the storage path

$path = ml_files_put_path('/tmp/report.pdf');

// Read back as PSR-7 stream

$stream = ml_files_read_stream($path);

// Check/Remove

$exists = ml_files_exists($path);

ml_files_delete($path);

// Build a public URL (driver method or config fallback)

$url = ml_files_url($path);

// Signed URL (HMAC SHA-256)

$signed = ml_files_sign_url('/files/'.$path, 600); // 10 minutes

$isValid = ml_files_verify_signature($signed);

7) Switching to S3 or GCS

S3

Install the SDK:

composer require aws/aws-sdk-php

Update files.mlc:

files {

default_disk = "s3"

# …

disks {

s3 {

bucket = "my-s3-bucket"

region = "us-east-1"

prefix = "uploads/"

public_base_url = "https://cdn.example.com" # optional

}

}

}

Provide credentials through the standard AWS mechanisms: environment variables, or whatever your infrastructure uses (an instance role, for example).

Google Cloud Storage

Install:

composer require google/cloud-storage

Config:

files {

default_disk = "gcs"

disks {

gcs {

project_id = "my-gcp-project"

key_file_path = "/absolute/path/to/key.json" # or omit to use ADC

bucket = "my-gcs-bucket"

prefix = "uploads/"

public_base_url = "https://cdn.example.com" # optional

}

}

}

8) Next.js example (frontend)

const onUpload = async (file: File) => {

const fd = new FormData();

fd.append('file', file);

const res = await fetch('/media/upload', {

method: 'POST',

body: fd,

});

if (!res.ok) throw new Error(await res.text());

const data = await res.json(); // { id, url, type }

return data;

};

9) Troubleshooting

Disk 'local' not configured in files.mlc

Ensure your file is wrapped like files { … } and that files.disks.local exists. The provider reads files.default_disk and files.disks.

“Syntax error … at: [disks.local]”

MLC is not TOML. Use curly braces:

disks { local { … } }, not [disks.local].

Cannot resolve constructor parameter $storage

The provider didn’t run. Make sure you call:

(new \MonkeysLegion\Files\Support\ServiceProvider())->register($builder);

before $builder->build().

Call to undefined method Container::set()

Register on the ContainerBuilder, not on the built Container.

helpers.php not found

Confirm the package has autoload.files: ["src/helpers.php"]. If your vendor copy placed it at the root, either move it under src/ or change the autoload path, then composer dump-autoload -o.

Invalid upload for field 'file'

Some stacks provide a raw $_FILES array. The current UploadManager normalizes that to a PSR-7 UploadedFileInterface. Verify your client uses field name file and sends multipart/form-data.

Permissions

Make sure storage/app and public/files are writable by the PHP process.
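A quick way to check this from PHP itself, as a sketch: the helper name unwritableDirs is hypothetical, and the two paths assume the default local disk settings shown earlier, relative to the project root. Run it as the same user as your web server or PHP-FPM process, otherwise the result can be misleading.

```php
<?php

declare(strict_types=1);

/**
 * Returns the directories that are missing or not writable by the current process.
 *
 * @param  string[] $dirs
 * @return string[]
 */
function unwritableDirs(array $dirs): array
{
    return array_values(array_filter(
        $dirs,
        static fn (string $dir): bool => !is_dir($dir) || !is_writable($dir)
    ));
}

// Paths assume the default local disk config from this post.
foreach (unwritableDirs(['storage/app', 'public/files']) as $dir) {
    echo "Not writable: {$dir}", PHP_EOL;
}
```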

10) Summary

Put your disk settings in config/files.mlc under a files { … } root.

Register the Files ServiceProvider on the builder.

Use UploadManager in your controllers, or call the global helpers.

Switch to S3/GCS by changing default_disk and filling the disk block.

For public serving on local, point your web server to public/files/ (or whatever you configured in public_base_url), or use the signing helper for private access.

That’s it. Happy uploading! If you run into anything else, share the stack trace along with your files.mlc; with both in hand, the fix is usually quick to spot.